Yahoo Finance Data Works Again With Crumb

Retrieving historical stock prices from Yahoo Finance with no API

30 Jul 2017 · 5 min read [ quant ]

Yahoo Finance has long been an excellent free financial resource, with a wealth of data and a convenient API that allowed open source programming libraries to access stock data. But not any more. As of May 2017, they have discontinued their API, probably as a result of Yahoo's pending acquisition by Verizon. This means that excellent tools like pandas-datareader are now broken, much to the dismay of many amateur algorithmic traders and analysts. It turns out that there is a rather hackish workaround which allows us to download the data as CSV (i.e. spreadsheet) files, which can of course then be read into Excel, pandas dataframes etc.

Just a legal disclaimer. This method involves making a large number of requests to Yahoo Finance, which may 'look like' a DDoS attack. Clearly, I am not trying to conduct a DDoS; I am merely trying to parse data for educational purposes. I am not responsible for how you use the methods demonstrated in this post.

Update as of 10/2/18: there is now a library on GitHub, fix-yahoo-finance, that puts this post to shame, with a direct pandas-datareader interface. I will leave this post up for legacy, but for any serious implementations, I wholeheartedly recommend that library.

Update as of 20/5/18: it seems that fix-yahoo-finance is becoming very inconsistent. So I guess this post does have some utility after all!

Update as of 9/1/20: looking back on this post, I don't think my solution is very good. A much better way would be to use requests directly and parse the CSV bytes, instead of physically downloading files and moving them.
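Following that update, here is a minimal sketch of the in-memory approach. It uses the standard library's urllib.request in place of requests so that it has no third-party dependencies, and it assumes the download URL returns a CSV body with a header row. The function names are my own, not from any library. Because the crumb seems to be tied to a browser cookie, the optional headers argument is there in case the request needs that cookie as well; I have not verified what Yahoo actually requires.

```python
import csv
import io
import urllib.request
from typing import Dict, List, Optional


def fetch_csv_bytes(url: str, headers: Optional[Dict[str, str]] = None,
                    timeout: float = 10.0) -> bytes:
    """Download a response body into memory instead of saving it as a file."""
    request = urllib.request.Request(url, headers=headers or {})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read()


def parse_csv_bytes(raw: bytes) -> List[Dict[str, str]]:
    """Turn CSV bytes (header row first) into one dict per data row."""
    # "utf-8-sig" also strips a byte-order mark if the server sends one
    text = raw.decode("utf-8-sig")
    return list(csv.DictReader(io.StringIO(text)))
```

Usage would be `rows = parse_csv_bytes(fetch_csv_bytes(link))` for a download link. If pandas is available, `pandas.read_csv(io.BytesIO(raw))` turns the same bytes straight into a dataframe.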

Overview

Although the API doesn't work, you can still manually download CSV files containing historical price data for a given ticker. To do so, go to finance.yahoo.com and enter a ticker in the search box. I entered 'AAPL'.

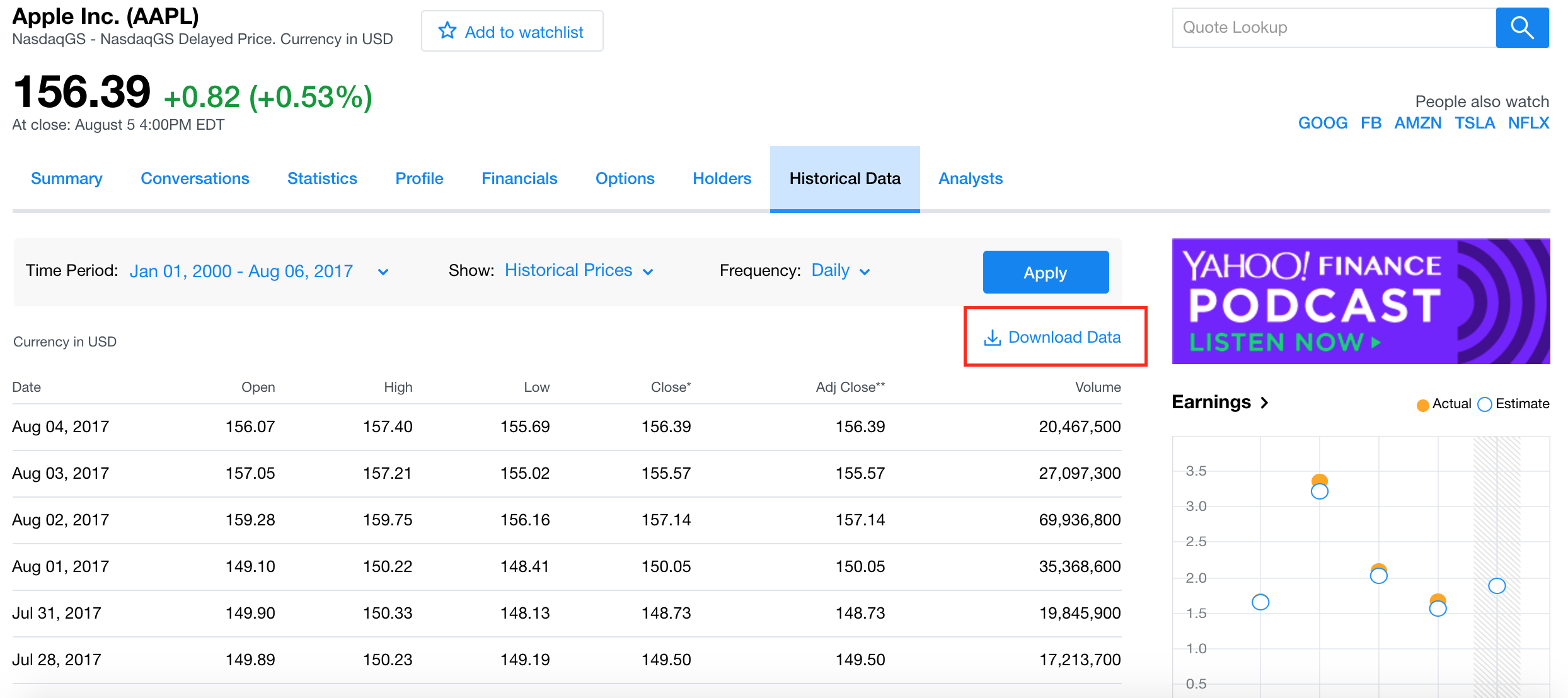

After navigating to the Historical Data tab, you will end up with a screen like this:

After editing the time period and applying it, you can click the Download Data button (which I emphasised in red above), and a CSV file will promptly start downloading in your browser. But manually doing this for 3000 stocks, as I would have to for my MachineLearningStocks project, would be tantamount to water torture. I thus needed a good way of automating this process, and luckily I found one.

The method

The Download Data button is actually a hyperlink, so I decided to take a quick look at the URL of this link. If you right-click a link in Chrome, there is an option to 'Copy Link Address'. Doing so for AAPL with a time period of Jan 01 2001 to Aug 06 2017, the link is:

https://query1.finance.yahoo.com/v7/finance/download/AAPL?period1=946656000&period2=1501948800&interval=1d&events=history&crumb=BkT/GAawAXc

Really, the structure of this URL is all that one needs to crack the problem of automation. I don't know what time system Yahoo Finance uses internally, but it's a pretty fair guess that 946656000 encodes the start of the period and 1501948800 encodes the end (Aug 06 2017).
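The two numbers look like Unix timestamps, i.e. seconds since midnight on 1 January 1970 UTC; that is my assumption rather than anything Yahoo documents. Python's datetime can convert in both directions to check it. Both numbers land exactly on a midnight eight hours ahead of UTC (UTC+8), which would fit Yahoo using the browser's local time zone. On that reading, 946656000 is midnight on 1 January 2000, a year earlier than the start date quoted above, while 1501948800 is midnight on 6 August 2017.

```python
from datetime import datetime, timedelta, timezone

# Assumption: period1/period2 are Unix timestamps (seconds since 1970-01-01 UTC).
UTC_PLUS_8 = timezone(timedelta(hours=8))


def to_period(year: int, month: int, day: int, tz: timezone = timezone.utc) -> int:
    """Midnight on the given date in time zone `tz`, as a Unix timestamp."""
    return int(datetime(year, month, day, tzinfo=tz).timestamp())


def from_period(seconds: int, tz: timezone = timezone.utc) -> datetime:
    """Inverse of to_period: the moment `seconds` after the epoch, shown in `tz`."""
    return datetime.fromtimestamp(seconds, tz=tz)


print(from_period(946656000, UTC_PLUS_8))   # 2000-01-01 00:00:00+08:00
print(from_period(1501948800, UTC_PLUS_8))  # 2017-08-06 00:00:00+08:00
```

To request a different period, `to_period(...)` produces the value for period1 or period2; pass the time zone you want midnight to fall in.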

I tried just changing 'AAPL' to 'GOOG' in the above URL and entering it into Chrome, and suddenly GOOG.csv had downloaded onto my machine. I did some digging as to what the 'crumb' is, and to my knowledge it is some sort of API token / cookie which will most likely be different for you.
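Since only the ticker (and, for a different user, the crumb) changes between links, the URL can be assembled from its parts. Here is a small sketch using urllib.parse.urlencode; `build_download_url` is a hypothetical name, and the crumb argument stands for whatever value appears in your own copied link.

```python
from urllib.parse import urlencode

BASE_URL = "https://query1.finance.yahoo.com/v7/finance/download/"


def build_download_url(ticker: str, period1: int, period2: int, crumb: str,
                       interval: str = "1d") -> str:
    """Rebuild the Download Data link for any ticker and period."""
    query = urlencode(
        {
            "period1": period1,
            "period2": period2,
            "interval": interval,
            "events": "history",
            "crumb": crumb,
        },
        safe="/",  # leave the "/" in crumbs such as "BkT/GAawAXc" unescaped
    )
    return BASE_URL + ticker + "?" + query


print(build_download_url("GOOG", 946656000, 1501948800, "BkT/GAawAXc"))
```

Keeping the query as a dict makes it harder to drop an `&` or mistype a parameter name than editing one long string by hand.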

In any case, I figured that I had basically solved the problem at this point, so the rest was a matter of coding it up in Python.

Implementing the solution in python

To interact with my browser (Google Chrome), I used the webbrowser library in Python. It is extremely intuitive to use: opening a URL is as simple as

```python
import webbrowser

webbrowser.open('https://www.google.com')
```

I wanted to download a list of tickers that I had saved in a text file called russell3000.txt. To read this into a Python list, we can use an elegant list comprehension:

```python
# One ticker per line; strip the newline and skip blank lines
with open('russell3000.txt') as f:
    tickers = [line.strip().lower() for line in f if line.strip()]
```

For each ticker, we will want to open the URL as specified earlier. The time period will be the same for all of them: January 01 2001 to Aug 06 2017. The code for this is as follows:

```python
import webbrowser

for ticker in tickers:
    # Adjacent string literals are joined first, then .format fills in the ticker
    link = ("https://query1.finance.yahoo.com/v7/finance/download"
            "/{}?period1=946656000&period2=1501948800&interval=1d"
            "&events=history&crumb=BkT/GAawAXc".format(ticker))
    webbrowser.open(link)
```

And that's it. This will download all of the CSV files directly to your downloads folder.

Or will it? I have found the hard way that web-related code often runs into errors with its queries: pages that you expect to exist have often been taken down or corrupted. When webbrowser tries to open such a URL, it will run into an error, but instead of continuing with the rest of the tickers, it'll just give up. The way around this is to use Python's exception handling:

```python
for ticker in tickers:
    try:
        link = ("https://query1.finance.yahoo.com/v7/finance/"
                "download/{}?period1=946656000&"
                "period2=1501948800&interval=1d&events=history"
                "&crumb=BkT/GAawAXc".format(ticker))
        webbrowser.open(link)
    except Exception as e:
        # Report the failing ticker and keep going with the rest
        print(ticker, str(e))
```

This way, if there is ever an error, the code will just print the ticker and the error, then carry on. In principle, we are finished. However, it is a bit annoying that all of these CSVs are in the downloads folder, rather than the directory for my project. I wrote a few lines of Python to fix this:
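Given the DDoS concern in the disclaimer, it may also be worth spacing the requests out and keeping a record of failures rather than only printing them. The sketch below is one way to do that; `open_all`, `make_url` and the one-second default delay are my own assumptions, not anything Yahoo specifies. Note that `webbrowser.open` only hands the URL to the browser and returns False if no browser could be launched, so an HTTP error such as an unknown ticker will typically show up as a failed download in the browser rather than as a Python exception.

```python
import time
import webbrowser
from typing import Callable, Iterable, List, Tuple


def open_all(tickers: Iterable[str], make_url: Callable[[str], str],
             opener: Callable[[str], bool] = webbrowser.open,
             delay: float = 1.0,
             sleep: Callable[[float], None] = time.sleep) -> List[Tuple[str, str]]:
    """Open one download link per ticker, pausing `delay` seconds between them.

    Returns (ticker, reason) pairs for the tickers that could not be opened.
    """
    failures = []
    for i, ticker in enumerate(tickers):
        if i > 0:
            sleep(delay)  # space requests out instead of firing them in a burst
        try:
            if not opener(make_url(ticker)):
                failures.append((ticker, "no browser could be launched"))
        except Exception as e:
            failures.append((ticker, str(e)))
    return failures
```

Here `make_url` can be any function returning the download link for a ticker, for example a lambda wrapping the `.format` call from the loop above. The `opener` and `sleep` parameters exist mainly so the loop can be exercised without a real browser.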

```python
import os

# Change these as appropriate
project_path = "/Users/Robert/Project/"
download_path = "/Users/Robert/Downloads/"

# Look at the contents of your download folder
for item in os.listdir(download_path):
    # If the file is a csv, move it to the project folder
    if item.endswith('.csv'):
        print(download_path + item)
        print(project_path + item)
        os.rename(download_path + item, project_path + item)
```

For AAPL.csv, the code would print:

```
/Users/Robert/Downloads/AAPL.csv
/Users/Robert/Project/AAPL.csv
```

and the file would be moved from the former location to the latter. Note that it will also move any other CSVs, but I didn't need to fix this as I don't normally have CSVs in my downloads.
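To avoid sweeping up unrelated CSVs, and to spot the tickers whose downloads failed or came back empty, one option is to move only the files named after the requested tickers. This sketch assumes each download is saved as `<ticker>.csv` (matched case-insensitively) and treats a file with no rows after the header as having no usable data; `collect_csvs` and `has_data_rows` are hypothetical names. It uses `shutil.move` instead of `os.rename` because `shutil.move` also works when the two folders are on different filesystems.

```python
import os
import shutil
from typing import Iterable, List, Tuple


def has_data_rows(path: str) -> bool:
    """True if the CSV has at least one non-blank line after the header."""
    with open(path, newline="") as f:
        return sum(1 for line in f if line.strip()) > 1


def collect_csvs(tickers: Iterable[str], download_path: str,
                 project_path: str) -> Tuple[List[str], List[str], List[str]]:
    """Move <ticker>.csv for the requested tickers only.

    Returns (ok, empty, missing) lists of tickers; empty files are moved too.
    """
    # Map lower-cased file names to the real names in the download folder
    available = {name.lower(): name for name in os.listdir(download_path)}
    ok, empty, missing = [], [], []
    for ticker in tickers:
        name = available.get(ticker.lower() + ".csv")
        if name is None:
            missing.append(ticker)
            continue
        destination = os.path.join(project_path, name)
        shutil.move(os.path.join(download_path, name), destination)
        if has_data_rows(destination):
            ok.append(ticker)
        else:
            empty.append(ticker)
    return ok, empty, missing
```

Calling `ok, empty, missing = collect_csvs(tickers, download_path, project_path)` leaves unrelated CSVs where they are, and `empty` plus `missing` give a list of tickers to retry or fetch from elsewhere.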

Conclusion

It is always a nice feeling when you can use some Python to save you hours of manual labour, and this was certainly the case here. Using this code, one can automate the downloading of a large number of data files containing historical stock prices. This was a very important aspect of my MachineLearningStocks project, as it is meaningless to try to learn from data without having the data.

As is often the case, the code I've shown here only scratches the surface. You will find that some of the CSVs didn't actually download, or are empty. Thus, to increase the quality of your training data, some troubleshooting is in order. Some of the improvements I've made:

- troubleshoot non-alphabet characters in the ticker names

- use Quandl, and its Python API, to download data that Yahoo Finance doesn't have

- use pandas-datareader to download data from Google Finance, if neither Yahoo nor Quandl has the data
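For the first of these, here is a rough sketch of ticker clean-up. It assumes Yahoo writes share classes with a hyphen (BRK-B rather than BRK.B or BRK/B) and that any other non-alphanumeric character is noise from the source list. Both are assumptions to check against the site, and `normalize_ticker` is a hypothetical helper.

```python
import re


def normalize_ticker(raw: str) -> str:
    """Clean a ticker from a text list into the form assumed for Yahoo's URLs."""
    ticker = raw.strip().upper()
    # Share-class separators such as "." or "/" become "-" (assumed Yahoo style)
    ticker = re.sub(r"[./]", "-", ticker)
    # Drop any remaining character that is not a letter, digit or hyphen
    return re.sub(r"[^A-Z0-9-]", "", ticker)


print(normalize_ticker("brk.b"))  # BRK-B
```

Applying this while reading russell3000.txt keeps the clean-up in one place, before any URL is built.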


Source: https://reasonabledeviations.com/2017/07/30/yahoo-historical-prices/
